by Doug Kreitzberg | Apr 30, 2020 | Coronavirus, Cybersecurity, Human Factor

Your organization’s cybersecurity team is on edge in the best of times. The bad guys are always out there and, like offensive linemen in American football who are only noticed when they commit a penalty, cybersecurity personnel are usually noticed only when something goes wrong. Now the game has changed. The quick transition to working from home, combined with the flood of COVID-19 scams, phishing, and malware drowning the cybersecurity threat intel sources (not to mention the isolation), may leave your team at a chronically high stress level. And cybersecurity is far more than just your technical safeguards. At the end of the day, the stress your team feels could lead them to put their focus in the wrong place and let their guard down.

Here’s what you can do about it

- Incorporate cybersecurity as a part of your overall business strategy process – now is the time to recognize cybersecurity as a key part of the organization’s strategy, one that enables you to drive your mission forward.

- Be a part of the cybersecurity planning process – be active, listen, and understand how your team is handling this.

- Leverage your bully pulpit – communicate to the staff about the key areas your cybersecurity team is focused on and the role they are playing to keep the organization secure while everyone is working from home.

- Check in – take the time to just check in and see how they are doing. A little goes a long way.

The truth is, when it comes to cybersecurity, your first and most effective line of defense is not your firewall or encryption protocol. It’s the people who form the team dedicated to protecting your organization. Working from home poses unique cybersecurity challenges, and it’s up to you to make sure your team is given the attention it needs to do its job well.

by Doug Kreitzberg | Apr 29, 2020 | Coronavirus, Privacy

As the COVID-19 pandemic continues, the world has turned to the tech industry to help mitigate the spread of the virus and, eventually, help transition out of lockdown. Earlier this month, Apple and Google announced that they are working together to build contact-tracing technology that will automatically notify users if they have been in proximity to someone who has tested positive for COVID-19. However, reports show that there is a severe lack of evidence that these technologies can accurately report infection data. Additionally, the question arises as to whether these types of apps can effectively assist the marginalized populations where the disease seems to have the largest impact. Combined with the invasion of privacy that this involves, the U.S. needs to more seriously interrogate whether or not the potential rewards of app-based contact tracing outweigh the obvious, and potentially long-term, risks involved.

First among the concerns is the potential for the information collected to be used to identify and target individuals. For example, in South Korea, some have used the information collected through digital contact tracing to dox and harass infected individuals online. Some experts fear that the collected data could also be used as a surveillance system to restrict people’s movement through monitored quarantine, “effectively subjecting them to home confinement without trial, appeal or any semblance of due process.” Such tactics have already been used in Israel.

Apple and Google have taken some steps to mitigate the concerns over privacy, claiming they are developing their contact tracing tools with user privacy in mind. According to Apple, the tool will be opt-in, meaning contact tracing is turned off by default on all phones. They have also enhanced their encryption technology to ensure that any information collected by the tool cannot be used to identify users, and promise to dismantle the entire system once the crisis is over.

Risk

Apple and Google are not using the phrase “contact tracing” for their tool, instead branding it as “exposure notification.” However, changing the name to sound less invasive doesn’t do anything to ensure privacy. And despite the steps Apple and Google are taking to make their tool more private, there are still serious short- and long-term privacy risks involved.

In a letter sent to Apple and Google, Senator Josh Hawley warns that the impact this technology could have on privacy “raises serious concern.” Despite the steps the companies have taken to anonymize the data, Senator Hawley points out that by comparing de-identified data with other data sets, individuals can be re-identified with ease. This could potentially create an “extraordinarily precise mechanism for surveillance.”
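To make the re-identification concern concrete, here is a minimal sketch of a so-called linkage attack in Python. Every record, field name, and the `reidentify` helper below is hypothetical and invented for illustration; none of it reflects the data Apple and Google’s tool actually collects. The sketch only shows how a data set with names removed can be matched against a second, named data set when the two share a few attributes.

```python
# Hypothetical "de-identified" records: names removed, but a few
# attributes (quasi-identifiers) are still present.
deidentified = [
    {"zip": "02139", "birth_year": 1985, "sex": "F", "status": "positive"},
    {"zip": "02139", "birth_year": 1990, "sex": "M", "status": "negative"},
    {"zip": "10001", "birth_year": 1985, "sex": "F", "status": "negative"},
]

# Hypothetical second data set that includes names (for example, a public roll).
public = [
    {"name": "Alice Example", "zip": "02139", "birth_year": 1985, "sex": "F"},
    {"name": "Bob Example", "zip": "02139", "birth_year": 1990, "sex": "M"},
    {"name": "Carol Example", "zip": "94105", "birth_year": 1972, "sex": "F"},
]

QUASI_IDENTIFIERS = ("zip", "birth_year", "sex")


def reidentify(deidentified, public, keys=QUASI_IDENTIFIERS):
    """Map names to de-identified records that match exactly one named person."""
    # Index the named data set by its quasi-identifier combination.
    index = {}
    for person in public:
        index.setdefault(tuple(person[k] for k in keys), []).append(person["name"])

    matches = {}
    for record in deidentified:
        names = index.get(tuple(record[k] for k in keys), [])
        if len(names) == 1:  # a unique match re-identifies the record
            matches[names[0]] = record
    return matches


linked = reidentify(deidentified, public)
for name, record in linked.items():
    print(name, "->", record["status"])
```

In this toy example, two of the three "anonymous" records end up attached to a name, even though the de-identified data set never contained one.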

Senator Hawley also questions Apple and Google’s commitment to delete the program after the crisis comes to an end. Many privacy experts have echoed these concerns, worrying what impact these expanded surveillance systems will have in the long term. There is plenty of precedent to suggest that relaxing privacy expectations now will change individual rights far into the future. The “temporary” surveillance program enacted after 9/11, for example, is still in effect today and was even renewed last month by the Senate.

Reward?

Contact tracing is often heralded as a successful method to limit the spread of a virus. However, a review published by a UK-based research institute shows that there is simply not enough evidence to be confident in the effectiveness of using technology to conduct contact tracing. The report highlights the technical limitations involved in accurately detecting contact and distance. Because of these limitations, this technology might lead to a high number of false positives and negatives. What’s more, app-based contact tracing is inherently vulnerable to fraud and cyberattack. The report specifically worries about the potential for “people using multiple devices, false reports of infection, [and] denial of service attacks by adversarial actors.”
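The worry about false positives and negatives can be illustrated with some simple arithmetic. The sketch below is not drawn from the report: the function name `notification_outcomes` and every number passed to it are hypothetical assumptions, chosen only to show how even a fairly accurate detector can produce more false alerts than true ones when real exposures are rare.

```python
def notification_outcomes(contacts, exposure_rate, sensitivity, specificity):
    """Estimate alert outcomes for a hypothetical exposure-notification app.

    contacts      -- number of recorded contact events
    exposure_rate -- fraction of those contacts that were real exposures
    sensitivity   -- fraction of real exposures the app detects
    specificity   -- fraction of non-exposures the app correctly ignores
    """
    exposed = contacts * exposure_rate
    not_exposed = contacts - exposed

    true_positives = exposed * sensitivity
    false_negatives = exposed - true_positives
    false_positives = not_exposed * (1 - specificity)

    # Share of alerts that correspond to a real exposure.
    precision = true_positives / (true_positives + false_positives)
    return {
        "true_positives": true_positives,
        "false_negatives": false_negatives,
        "false_positives": false_positives,
        "precision": precision,
    }


# All inputs below are made-up illustrative values, not measurements.
result = notification_outcomes(
    contacts=10_000, exposure_rate=0.02, sensitivity=0.8, specificity=0.9
)
for key, value in result.items():
    print(f"{key}: {value:.2f}")
```

Under these assumed values, false alerts outnumber true alerts several times over, while a fifth of the real exposures go unreported.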

Technical limitations aside, the effectiveness of digital contact tracing also requires both a large compliance rate and a high level of public trust and confidence in this technology. Nothing suggests Apple and Google can guarantee either of these requirements. The lack of evidence showing the effectiveness of digital contact tracing calls into question the use of such technology at the cost of serious privacy risks to individuals.

If we want to appropriately engage technology, we should determine the scope of the problem with an eye towards assisting the most vulnerable populations first and at the same time ensure that the intended outcomes can be obtained in a privacy-preserving manner. Governments need to lay out strict plans for oversight and regulation, coupled with independent review. Before compromising individual rights and privacy, the U.S. needs to thoroughly assess the effectiveness of this technology while implementing strict and enforceable safeguards to limit the scope and length of the program. Absent that, any further intrusion into our lives, especially if the technology is not effective, will be irreversible. In this case, the cure may well be worse than the disease.

by Doug Kreitzberg | Apr 23, 2020 | Coronavirus, Data Breach

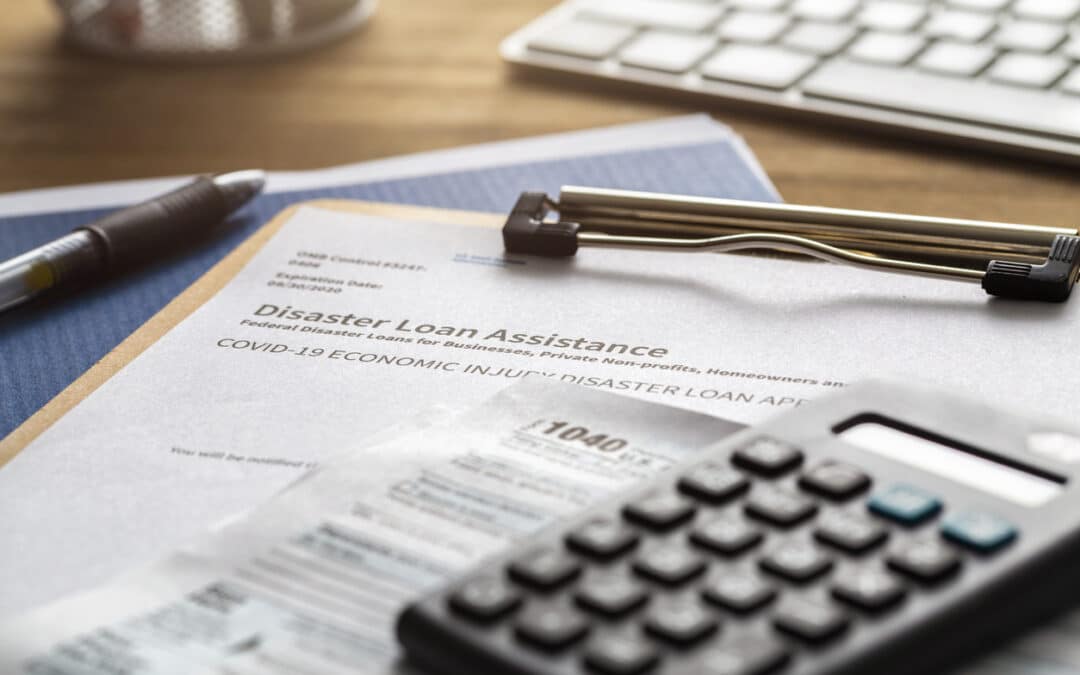

This week, reports surfaced that the Small Business Administration’s COVID-19 loan program experienced an unintentional data breach last month, leaving the personal information of up to 8,000 applicants temporarily exposed. This is just the latest in a long line of COVID-19 cyberattacks and exposures since the pandemic began.

The affected program is the SBA’s long-standing Economic Injury Disaster Loan program (EIDL), which Congress recently expanded to help small businesses affected by the COVID-19 crisis. The EIDL is separate from the new Paycheck Protection Program, which is also run by the SBA.

According to a letter sent to affected applicants, on March 25th the SBA discovered that the application system exposed personal information to other applicants using the system. The information potentially exposed includes names, addresses, phone numbers, birth dates, email addresses, citizenship status, insurance information, and even Social Security numbers of applicants.

According to the SBA, upon discovering the issue, they “immediately disabled the impacted portion of the website, addressed the issue, and relaunched the application portal.” All businesses affected by the COVID-19 loan program breach were eventually notified by the SBA and offered a year of free credit monitoring.

A number of recent examples show that the severe economic impact of the pandemic has left the SBA scrambling. Typically, the SBA is meant to issue funds within three days of receiving an application. However, with more than 3 million applications flooding in, some have had to wait weeks for relief.

The unprecedented number of applications filed, coupled with the fact that the SBA is the smallest major federal agency and faced an 11% funding cut in the last budget proposal, likely contributed to the accidental exposure of applicant data. However, whether accidental or not, a data breach is still a data breach. It’s important that all organizations take the time to ensure their systems and data remain secure, and that mistakes do not lead to more work and confusion during a time of crisis.

by Doug Kreitzberg | Apr 22, 2020 | Coronavirus, Data Breach

The current onslaught of cyberattacks related to the COVID-19 pandemic continued this week. Tuesday night, reports surfaced that attackers publicized over 25,000 emails and passwords from the World Health Organization, The Gates Foundation, and other organizations working to fight the current COVID-19 pandemic. What’s more, this new data dump starkly shows how easily data breaches related to COVID-19 can fuel disinformation campaigns.

The sensitive information was initially posted online over the course of Sunday and Monday, and quickly spread to various corners of the internet, frequently shared by right-wing extremists. These groups rapidly used the breached data to create widespread harassment and disinformation campaigns about the COVID-19 pandemic. One such group posted the emails and passwords to their Twitter page and pushed a conspiracy theory that the information “confirmed that SARS-Co-V-2 was in fact artificially spliced with HIV.”

A significant portion of the data may actually be out of date and from previous data breaches. In a statement to The Washington Post, The Gates Foundation said they “don’t currently have an indication of a data breach at the foundation.” Reporting by Motherboard also found that much of the data involved matches information stolen in previous data breaches. This indicates that at least some of the passwords circulating are not linked to the organizations’ internal systems unless employees are reusing passwords.

However, some of the information does appear to be authentic. Cybersecurity expert Robert Potter was able to use some of the data to access WHO’s internal computer systems and said that the information appeared to be linked to a 2016 breach of WHO’s network. Potter also noted a trend of disturbingly poor password security at WHO. “Forty-eight people have ‘password’ as their password,” he said, while others simply used their own first names or “changeme.”
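The weak passwords Potter describes are the kind a basic check can catch when a password is first set. The following is a minimal Python sketch under stated assumptions: the `is_weak` helper, the tiny `COMMON_PASSWORDS` list, the `first.last` username format, and the sample accounts are all hypothetical and are not taken from WHO’s systems or the leaked data.

```python
# Tiny illustrative deny-list; a real one would be far larger.
COMMON_PASSWORDS = {"password", "changeme", "123456"}


def is_weak(username, password):
    """Flag passwords that are common defaults or just the user's first name.

    Assumes (hypothetically) that usernames look like "first.last".
    """
    candidate = password.strip().lower()
    first_name = username.split(".")[0].lower()
    return candidate in COMMON_PASSWORDS or candidate == first_name


# Made-up accounts used only to exercise the check.
accounts = {
    "jane.doe": "password",
    "sam.lee": "Sam",
    "ana.ruiz": "changeme",
    "lee.park": "t7#Vq!x92&Lm",
}

weak = sorted(user for user, pw in accounts.items() if is_weak(user, pw))
print(weak)
```

A check like this belongs at the moment a password is chosen, since stored passwords should only ever exist as salted hashes and cannot be inspected afterwards.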

Consequences

Whether the majority of the information is accurate or not, it does not change the fact that the alleged breach has successfully fueled more disinformation campaigns about the COVID-19 pandemic. In the past few weeks, many right-wing extremist groups have used disinformation about the pandemic to spread further fear and confusion in the hopes of sowing more chaos.

This episode starkly shows how data breaches can cause damage beyond the exposure of sensitive information. They can also be weaponized to spread disinformation and even lead to political attacks.

by Doug Kreitzberg | Apr 21, 2020 | Cybersecurity, Human Factor, Risk Culture

Regardless of your business or your personal situation, it is hard to imagine that you have not been impacted by COVID. Among other things, it has exposed how vulnerable we are personally. How vulnerable our company is. How vulnerable our communities are.

And these vulnerabilities can create a sense of anxiety, which can build on itself, leaving us feeling helpless.

Perhaps the single most important thing we can do when we are vulnerable is to connect. To communicate. To reach out to others. If we do nothing but isolate, the vulnerabilities expose and consume us.

Cybersecurity professionals deal with vulnerabilities all the time. Often these individuals work as a separate group, perhaps communicating only with other IT staff. Unfortunately, apart from compliance audit reports or token security awareness programming, cybersecurity is rarely communicated and integrated into the overall culture of the business. How many times do security professionals say of corporate users and leadership, “They just don’t understand,” while C-suite, marketing, or other department users say of cybersecurity, “They just don’t understand”? Imagine the understanding that could occur if everyone began to lean in and communicate about these issues as one team.

Just as in our personal lives during these times, a key way to address vulnerabilities in your systems is by connecting and communicating across channels. The more the IT and cybersecurity team engages with business leaders, staff, and other stakeholders, the better equipped the organizational culture will be to mitigate vulnerabilities and build resilience.