by Doug Kreitzberg | Oct 12, 2021 | Phishing

Spotting phish is not always easy. Sure, there are some phish you get that are easy to spot, but over the years scammers have worked hard to create more convincing emails. By more convincingly spoofing common emails we see every day in our inbox and by leveraging cognitive biases we all have, more sophisticated phishing emails can be pretty difficult to catch. In a recently published research paper, Rick Walsh, professor of Media and Information at Michigan State University, takes a closer look at how IT experts spot the phish they get and highlights the ways even the experts can fall prey to sophisticated phishing campaigns.

How Experts Spot Phish

Interviewing 21 IT experts, Walsh found 3 common steps they use to spot phish that come into their inbox.

Step 1: Sense Making

First, experts simply try to understand why they are receiving this email and how it relates to other things in their life. They look for things that seem to be off about the email, noting discrepancies like typos or things they know to be untrue. They also try to understand what the email is trying to get them to do. If they see a lot of discrepancies and are being urged to take quick action, they move on to the next step.

Step 2: Suspicion

In this step, the experts move away from trying to make sense of the email and start asking themselves whether the email is legitimate. To determine this, they look for evidence, such as hovering over the link to see where it leads and checking the sender’s name and address. After collecting evidence, they move to the final step.
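The hover check described above can be roughly approximated in code. The following is a minimal Python sketch, not a method from the study: the function names and the example addresses are made up for illustration, and it only catches the simple case where the visible link text names one site while the link itself points to another.

```python
from urllib.parse import urlparse


def host_of(url: str) -> str:
    """Return the lowercase hostname of a URL, without a leading 'www.'."""
    # urlparse only recognises the host when a scheme or '//' is present,
    # so add '//' for bare text such as 'example.com/login'.
    if "//" not in url:
        url = "//" + url
    host = (urlparse(url).hostname or "").lower()
    return host[4:] if host.startswith("www.") else host


def link_text_mismatch(display_text: str, href: str) -> bool:
    """True when the visible text looks like a web address but the
    link actually points to a different host."""
    text = display_text.strip()
    # Ordinary wording such as 'Click here' is not an address: nothing to compare.
    if " " in text or "." not in text:
        return False
    return host_of(text) != host_of(href)


# The visible text names example.com, but the link goes somewhere else.
print(link_text_mismatch("www.example.com", "http://example.com.evil.test/login"))
```

A check like this is only one piece of evidence. A link with neutral text such as "Click here" passes it, so the sender’s name and address still need a separate look.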

Step 3: Decision

By this step, the experts have concluded whether the email is legitimate. If they believe it’s a phish, they take some form of action. Some simply deleted the email; others took proactive steps like reporting the phish or alerting other employees to the potential scam.

Even The Experts Can Fail

After discussing the ways experts typically spot phish, Walsh highlights a number of ways even the experts could mess up when spotting phish. Here are 3 of the most important failures Walsh highlights.

1. Automation Failure

Automation failure happens when we’re not engaging in enough sense making. We all get a lot of emails every day, so sometimes we go on autopilot as we work through our inbox and stop asking why a given email has arrived or what it wants us to do. It’s therefore essential to pause for a moment before opening our email and make sure we are acting with awareness.

2. Accumulation Failure

Accumulation failure happens when we identify discrepancies in an email but only look at them one by one instead of as a whole. It can be easy to find any number of explanations for a single discrepancy, so if you’re only thinking about each discrepancy in isolation, you may not become suspicious. However, if you start to add up all the issues you’re seeing in the email, it becomes a lot easier to tell when you need to be suspicious.
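To make the difference concrete, here is a small Python sketch of the two ways of judging the same email. The discrepancy names, weights, and threshold are invented for illustration and are not taken from the research.

```python
# Hypothetical weights: how much each kind of discrepancy raises suspicion.
WEIGHTS = {"typo": 1, "unexpected_sender": 2, "urgent_request": 2, "odd_link": 3}
THRESHOLD = 4  # assumed level at which an email deserves a closer look


def suspicious_one_by_one(found):
    """Judge each discrepancy in isolation: none may be alarming on its own."""
    return any(WEIGHTS[d] >= THRESHOLD for d in found)


def suspicious_as_a_whole(found):
    """Add the discrepancies up before deciding."""
    return sum(WEIGHTS[d] for d in found) >= THRESHOLD


found = ["typo", "unexpected_sender", "urgent_request"]
print(suspicious_one_by_one(found))   # each item alone stays under the threshold
print(suspicious_as_a_whole(found))   # together they cross it
```

With these assumed numbers, no single discrepancy reaches the threshold, but the three together do, which is exactly the situation accumulation failure describes.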

3. Evidence Failure

Lastly, evidence failure happens when you misjudge the evidence you see in an email. If, for example, you hover over the link in the email and it shows you a spoofed link that looks similar to a common website you use, you may not realize the link is bad.
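Lookalike links are hard for the eye to catch, but a simple string comparison can help. The sketch below uses Python’s standard `difflib` module to flag a domain that is close to, but not the same as, a domain you trust. The trusted list, the 0.8 threshold, and the function name are assumptions chosen for illustration.

```python
from difflib import SequenceMatcher

# Hypothetical list of domains the reader actually uses.
TRUSTED = ["paypal.com", "microsoft.com"]


def lookalike_of(domain, trusted=TRUSTED, threshold=0.8):
    """Return the trusted domain that `domain` imitates, or None.

    An exact match is the real site, so it is not reported as a lookalike.
    """
    domain = domain.lower()
    for real in trusted:
        if domain == real:
            return None
        # ratio() is 1.0 for identical strings and falls towards 0.0
        # as the two strings share fewer characters in order.
        if SequenceMatcher(None, domain, real).ratio() >= threshold:
            return real
    return None


print(lookalike_of("paypa1.com"))   # the digit 1 stands in for the letter l
print(lookalike_of("paypal.com"))
```

This is a rough heuristic rather than a complete defense. It will miss spoofs that use a very different string, so it supports careful reading of the link but does not replace it.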

What’s important about this research is that, when it comes to social engineering, even the experts can get tripped up. It’s therefore vital that security awareness training goes beyond simply teaching you what to look for in an email. Awareness training should also teach you how an email plays on your own cognitive biases and the ways we sometimes fail to take account of important information when we look at our inbox.

by Doug Kreitzberg | Dec 9, 2020 | Business Email Compromise, Data Breach, Disinformation, Human Factor, Phishing

Yesterday, I received an email from a business acquaintance that included an invoice. I knew this person and his business but did not recall him ever doing anything for me that would necessitate a payment. I called him about the email and he said that his account had indeed been hacked and those emails were not from him. What occurred was an example of business email compromise (BEC) using stolen credentials.

Typically, BEC is a form of cyber attack where attackers create fake emails that impersonate executives in order to convince employees to send money to a bank account controlled by the bad guys. According to the FBI, BEC is the costliest form of cyber attack, scamming businesses out of $1.7 billion in 2019 alone. One reason these attacks are becoming so successful is that attackers are upping their game: instead of creating fake email addresses that look like they belong to a CEO or a vendor, attackers are now learning to steal login info to make their scams that much more convincing.

By compromising credentials, BEC attackers have opened up multiple new avenues to carry out their attacks and increase their chance of success. Among all the ways compromised credentials can be used for BEC attacks, here are 3 that every business should know about.

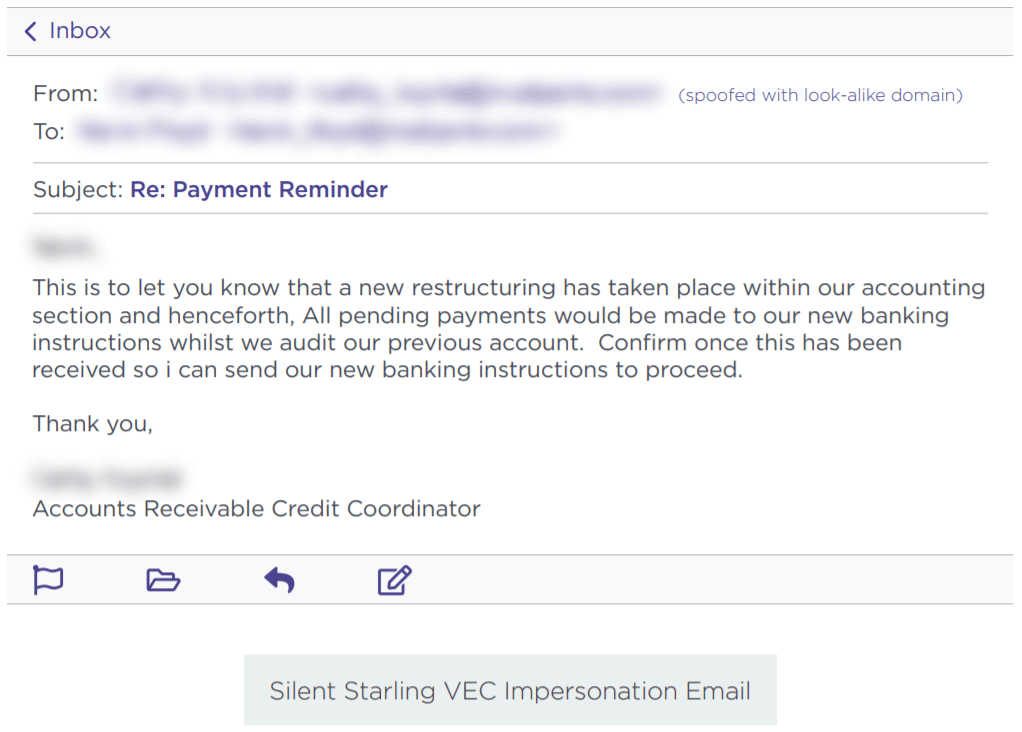

Vendor Email Compromise

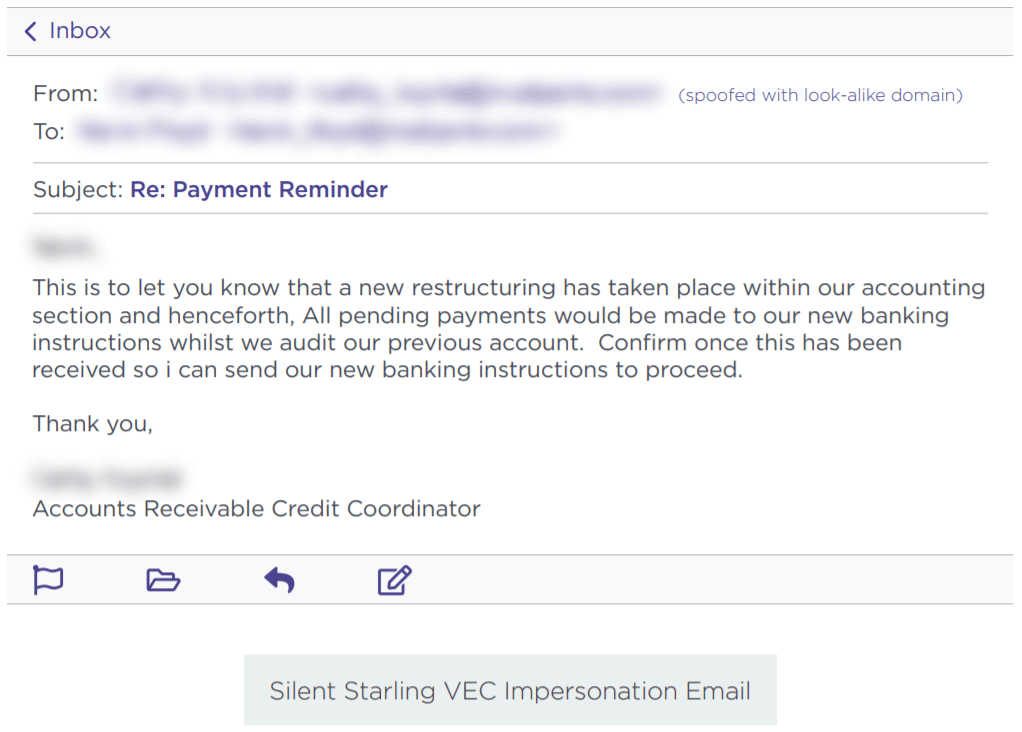

One way BEC attackers can use compromised credentials has been called vendor email compromise. The name, however, is a little misleading, because vendors aren’t actually the target of the attack. Instead, they are the means of carrying out an attack on a business. Essentially, BEC attackers will compromise the email credentials of an employee in the billing department of a vendor, then send invoices from that email to businesses requesting they make payment to a bank account controlled by the attackers.

Source: Agari

Inside Jobs

Another way attackers can use compromised credentials to carry out BEC scams is to use the credentials of someone in the finance or accounting department of an organization to make payment requests to other employees and suppliers. By using the actual email of someone within the company, payment requests look far more legitimate and increase the chance that the scam will succeed.

What’s more, attackers can use compromised credentials of someone in the billing department to even target customers for payment. Of course, if the customers make a payment, it goes to the attackers and not to the company they think they are paying. This is a new method of BEC, but one that is gaining steam. In a press release earlier this year, the FBI warned of the use of compromised credentials in BEC to target customers.

Advanced Intel Gathering

Another method to use compromised credentials for BEC doesn’t even involve using the compromised account to request payments. Instead, attackers will gain access to the email account of an employee in the finance department and simply gather information. With enough time, attackers can study who the business releases funds to, how often, and what the payment requests look like. With all of this information under their belt, attackers will then create a near-perfect impersonation of the entity requesting payment and send the request exactly when the business is expecting it.

Attackers have even figured out a way to retain access to employees’ emails after they’ve been locked out of the account. Once they’ve gained access to an employee’s inbox, attackers will often set the account to auto-forward any emails the employee receives to an account controlled by the attacker. That way, if the employee changes their password, the attacker can still view every message the employee receives.

What you can do

All three of these emerging attack methods should make businesses realize that BEC is a real and dangerous threat. It can be far harder to detect a BEC attack when the attackers are sending emails from a real address or using insider information from compromised credentials to expertly impersonate a vendor. Attackers can gain access to these credentials in a number of ways. The first is through initial phishing attacks designed to capture employee credentials. Earlier this year, for example, attackers launched a spear phishing campaign to gather the credentials for finance executives’ Microsoft 365 accounts in order to then carry out a BEC attack. Attackers can also pay for credentials on the dark web that were stolen in past data breaches. Even though these breaches often involve credentials for employees’ personal accounts, if an employee uses the same login info for every account, then attackers will have easy access to carry out their next BEC scam.

While the use of compromised credentials can make BEC harder to detect, there are a number of things organizations can do to protect themselves. First, businesses should ensure all employees (and vendors!) are properly trained in spotting and identifying phishing attacks. Second, organizations should require proper password management for all users. Employees should use different credentials for every account, and multi-factor authentication should be enabled for vulnerable accounts such as email. Lastly, organizations should disable or limit auto-forwarding to prevent attackers from continuing to capture emails received by a targeted employee.

Businesses should also ensure employees in the finance department receive additional BEC training. A report earlier this year found an 87% increase in BEC attacks targeting employees in finance departments. Ensuring employees in the finance department know, for example, to confirm any changes to a vendor’s bank information before releasing funds, is key to protecting your organization from falling prey to the increasingly sophisticated BEC landscape.
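The advice to confirm bank changes before releasing funds can be written down as a simple rule. The Python sketch below is illustrative only: the vendor record, account numbers, and function name are hypothetical, and a real accounts payable process would involve more than this single check.

```python
# Hypothetical vendor records: the bank account already on file for each vendor.
ACCOUNTS_ON_FILE = {"Acme Supplies": "111-222-333"}


def payment_decision(vendor, invoice_account, confirmed_out_of_band=False):
    """Return 'pay' or 'hold' for an incoming invoice.

    A payment is held whenever the bank account on the invoice differs from
    the one on file (or the vendor is unknown), unless someone has confirmed
    the change through a separate channel, such as calling a known number.
    """
    if ACCOUNTS_ON_FILE.get(vendor) == invoice_account:
        return "pay"
    return "pay" if confirmed_out_of_band else "hold"


print(payment_decision("Acme Supplies", "111-222-333"))  # matches the record
print(payment_decision("Acme Supplies", "999-888-777"))  # changed account
```

The important design choice is that the confirmation comes from outside the email thread. A reply to the suspicious message would go straight back to the attacker if the account is compromised.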

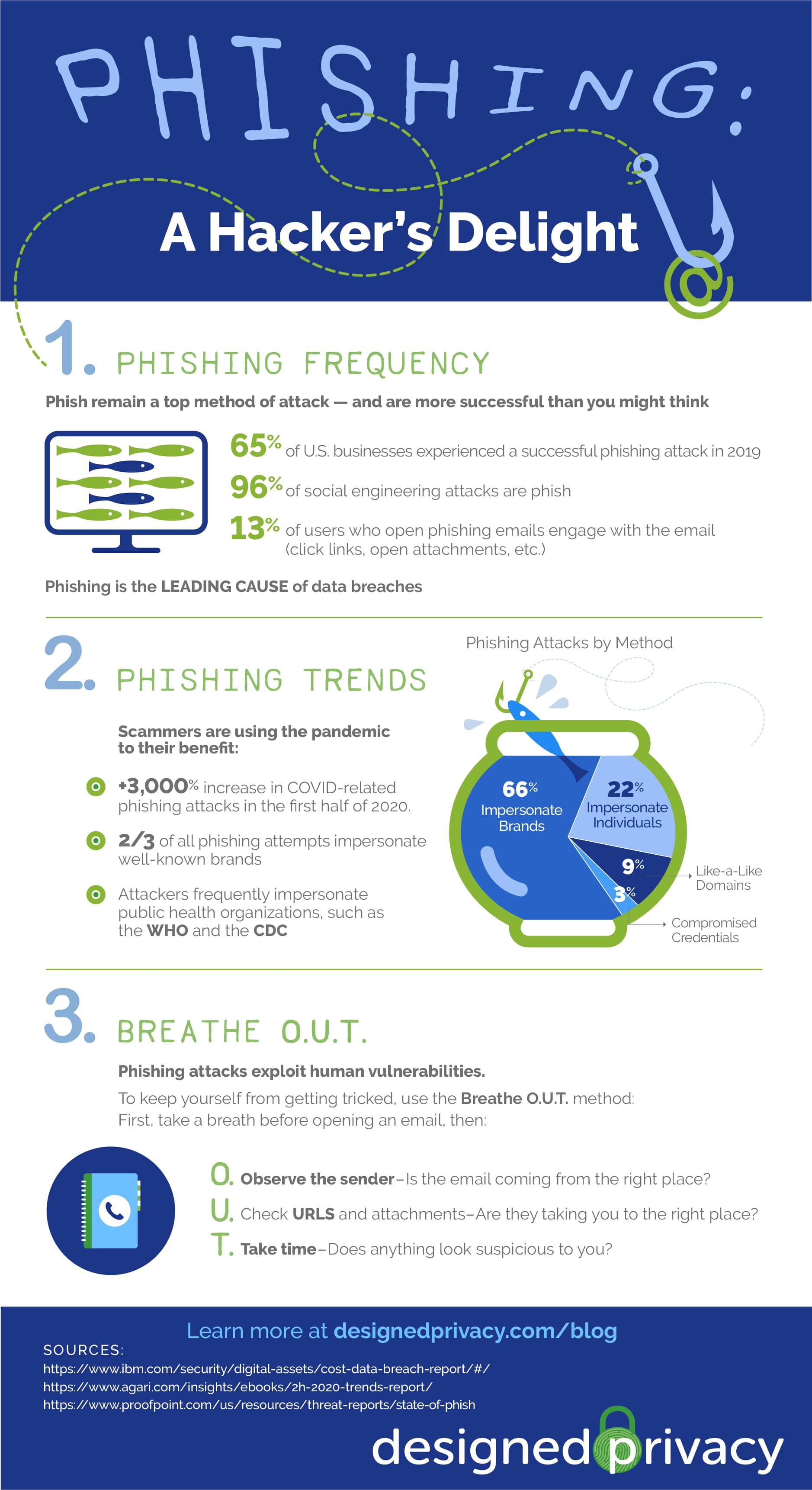

by Doug Kreitzberg | Oct 27, 2020 | Phishing

by Doug Kreitzberg | Aug 19, 2020 | Artificial Intelligence, Disinformation, Human Factor, Phishing, social engineering

Earlier this month, a study by University College London identified the top 20 security issues and crimes likely to be carried out with the use of artificial intelligence in the near future. Experts then ranked the list of future AI crimes by the potential risk associated with each crime. While some of the crimes are what you might expect to see in a movie (such as autonomous drone attacks or using driverless cars as a weapon), it turns out 4 out of the 6 crimes of highest concern are less glamorous and instead focus on exploiting human vulnerabilities and biases.

Here are the top 4 human-factored AI threats:

Deepfakes

The ability for AI to fabricate visual and audio evidence, commonly called deepfakes, is the overall most concerning threat. The study warns that the use of deepfakes will “exploit people’s implicit trust in these media.” The concern is not only related to the use of AI to impersonate public figures, but also the ability to use deepfakes to trick individuals into transferring funds or handing over access to secure systems or sensitive information.

Scalable Spear-Phishing

Other high-risk, human-factored AI threats include scalable spear-phishing attacks. At the moment, phishing emails targeting specific individuals require time and energy to learn the victim’s interests and habits. However, AI can expedite this process by rapidly pulling information from social media or impersonating trusted third parties. AI can therefore make spear-phishing more likely to succeed and far easier to deploy on a mass scale.

Mass Blackmail

Similarly, the study warns that AI can be used to harvest information about individuals on a mass scale, identify those most vulnerable to blackmail, then send tailored threats to each victim. These large-scale blackmail schemes can also use deepfake technology to create fake evidence against those being blackmailed.

Disinformation

Lastly, the study highlights the risk of using AI to author highly convincing disinformation and fake news. Experts warn that AI will be able to learn what type of content will have the highest impact, and generate different versions of one article to be published by a variety of (fake) sources. This tactic can help disinformation spread even faster and make it seem more believable. Disinformation has already been used to manipulate political events such as elections, and experts fear the scale and believability of AI-generated fake news will only increase the impact disinformation will have in the future.

The results of the study underscore the need to develop systems to identify AI-generated images and communications. However, that might not be enough. According to the study, when it comes to spotting deepfakes, “[c]hanges in citizen behaviour might [ ] be the only effective defence.” With the majority of the highest risk crimes being human-factored threats, focusing on our own ability to understand ourselves and developing behaviors that give us the space to reflect before we react may therefore become the most important tool we have against these threats.

by Doug Kreitzberg | Aug 17, 2020 | Business Email Compromise, Cyber Awareness, Phishing

We understand the risks of having our email credentials compromised. If it happens, we know to change our login information as quickly as possible to ensure whoever got in can’t continue to access our emails. The problem, however, is that there is a very simple way for hackers to continue to access the content of your inbox even after you change your password: auto-forwarding. If someone gains access to your email, they can quickly change your configurations to have every single email sent to your inbox forwarded to the hacker’s personal account as well.

The most immediate concern with unauthorized auto-forwarding is the ability for a hacker to view and steal any sensitive or proprietary information sent to your inbox. However, the risks associated with this form of attack go much further. By setting up auto-forwarding, phishers can conduct reconnaissance in order to carry out more sophisticated social engineering scams in the long term.

For example, auto-forwarding can help hackers carry out spear phishing attacks — a form of phishing where the scammer tailors phishing emails to target specific individuals. By learning how the target communicates with others and what type of email they are most likely to respond to, hackers can create far more convincing phish and increase the chance that their attack will be a success.

Bad actors can also use auto-forwarding to craft highly sophisticated business email compromise (BEC) attacks. BEC is a form of social engineering in which a scammer impersonates vendors or bosses in order to trick employees into transferring funds to the wrong place. If the scammer is using auto-forward, they may be able to see specific details about projects or services being carried out and gain a better sense of the formatting, tone, and style of invoices or transfer requests. This can then be used to create fake invoices for actual services that require payment.

How to protect yourself from unauthorized auto-forwarding

There are, however, a number of steps you and your organization can take to prevent hackers from setting up auto-forwarding. The most obvious is to prevent access to your email account in the first place. Multi-factor authentication, for example, places an extra line of defense between the hacker and your inbox. However, every organization should also disable or limit users’ ability to set up auto-forwarding. Some email providers allow organizations to block auto-forwarding by default. Your IT or security team can then manually enable auto-forwarding for specific employees when requested for legitimate reasons and for a defined time period.
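Alongside blocking auto-forwarding, it can help to review the forwarding settings that already exist. The Python sketch below assumes the mailbox settings have been exported into a simple list. The `Mailbox` class, its fields, and the sample addresses are hypothetical and do not correspond to any particular email provider’s API.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Mailbox:
    owner: str                        # the employee's own address
    forward_to: Optional[str] = None  # auto-forward target, if one is set


def external_forwards(mailboxes: List[Mailbox], company_domain: str) -> List[Mailbox]:
    """Return mailboxes that auto-forward to an address outside the company."""
    flagged = []
    for box in mailboxes:
        if box.forward_to is None:
            continue
        # Everything after the last '@' is the domain of the forwarding target.
        target_domain = box.forward_to.rsplit("@", 1)[-1].lower()
        if target_domain != company_domain.lower():
            flagged.append(box)
    return flagged


mailboxes = [
    Mailbox("ana@example.com"),
    Mailbox("raj@example.com", forward_to="raj.backup@example.com"),
    Mailbox("lee@example.com", forward_to="collector@mail.test"),
]
for box in external_forwards(mailboxes, "example.com"):
    print(box.owner, "->", box.forward_to)
```

Anything this review flags is a prompt for a conversation with the employee, since some external forwards are legitimate and approved.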

When it comes to the risks of auto-forwarding, the point is that the more hackers can learn about your organization and your employees, the more convincing their future phishing and BEC attacks will be. By putting safeguards in place that help prevent access to email accounts and block auto-forwarding, you can lower the risk that a bad actor will gain information about your organization and carry out sophisticated social engineering attacks.

by Doug Kreitzberg | Aug 12, 2020 | Cybersecurity, Phishing, social engineering

By now, most people have heard about the hack of high-profile Twitter accounts that took place on July 15th. To carry out the attack, the perpetrators used a social engineering tactic called “vishing” — short for voice phishing — in which attackers use phone calls rather than email or messages to trick individuals into giving out sensitive information. The incident once again highlights the risks associated with human rather than technical vulnerabilities, and shows Twitter’s shortcomings in managing employee access controls.

On the day of the attack, the accounts of big names like President Barack Obama, Elon Musk, Jeff Bezos, and Joe Biden all tweeted a message asking users to send them bitcoin with the promise of being sent back double the amount. Of course, this turned out to be a scam and the tweets were quickly removed, but not before the hackers received over $100,000 worth of bitcoin.

According to a statement by Twitter, the attackers gained access to the company’s internal systems the same day as the attack. Using “a phone spear phishing attack” (commonly known as vishing), the scammers tricked lower-level employees into revealing credentials that allowed them access to Twitter’s internal system. This access did not allow the attackers to immediately access user accounts. Once inside, however, they were able to carry out additional attacks on other employees who did have access to Twitter’s support controls. From there, the hackers had access to every account on Twitter and could make important changes, including changing the email address associated with an account.

While vishing is not the best-known or most frequent form of social engineering attack, the Twitter hack shows just how dangerous it can be. It’s the one type of attack that requires no code, email, or USB device to carry out. However, there are key protections businesses can use, and that should have been in place at Twitter. First among them is to have explicit policies and safeguards for disclosing credentials and wiring funds. Individual employees should not be allowed to give out information on their own, even if they think they are giving it to a trusted colleague. Instead, employees should have to communicate with a third party within the company who can verify an employee’s identity before sharing credentials.

Secondly, Twitter needed to have stricter access controls in place, throughout all levels of the company. While Twitter claims that “access to [internal] tools is strictly limited and is only granted for valid business reasons,” this was clearly not the case on July 15th. And even though the employees that were initially exploited did not have full access to user accounts, the hackers were able to leverage the limited access they had to then gain even more advanced and detailed permission rights. Businesses should therefore ensure all employees, even with limited access, have the proper cyber awareness training and undergo simulations of various social engineering attacks.

Lastly, when it comes to vishing, it’s important to use techniques similar to those used to spot other types of scams. When getting a call, the first thing to do is simply take a breath. This will interrupt automatic thinking and allow you to be more alert. You also need to make sure you are actually talking to who you think you are. Scammers can make a call look like it’s coming from a trusted number, so even if you get a call from someone in your contacts it could still be a scammer. That’s why it’s important to focus on what the phone call or voicemail is trying to convey. Is it too urgent? Are they probing for sensitive or personal information about you or others? Is it relevant to what you already know? If anything at all seems off, be extra cautious before talking about anything that could be damaging.

While you may feel comfortable spotting a phishing attack, hackers and scammers are constantly looking for new ways to trick us. And, as the Twitter hack shows, they are very good at what they do. It’s better to be too cautious and assume you are at risk of being scammed than to think it could never happen to you. Because it can.