by Doug Kreitzberg | Nov 19, 2020 | Artificial Intelligence, Coronavirus, Disinformation, Human Factor, Misinformation, Reputation, social engineering, Social Media

By now, almost everyone has heard about the threat of misinformation within our political system. At this point, fake news is old news. However, this doesn’t mean the threat is any less dangerous. In fact, over the last few years misinformation has spread beyond the political world and into the private sector. From a fake news story claiming that Coca-Cola was recalling Dasani water because of a deadly parasite in the bottles, to false reports that an Xbox killed a teenager, more and more businesses are facing online misinformation about their brands, damaging the reputations and financial stability of their organizations. While businesses may not think to take misinformation attacks into account when evaluating the cyber threat landscape, it is increasingly clear that misinformation should be a primary concern. Just as businesses are beginning to understand the importance of being cyber-resilient, they also need policies in place to stay misinformation-resilient. This means organizations need to start taking both a proactive and a reactive stance toward future misinformation attacks.

Perhaps the method of disinformation we are all most familiar with is the use of social media to quickly spread false or sensationalized information about a person or brand. However, disinformation can take a number of different guises. Fraudulent domains, for example, can be used to impersonate companies in order to misrepresent brands. Attackers also create copycat sites that look like your website but actually contain malware that visitors download when they visit the site. Inside personnel can weaponize digital tools to settle scores or hurt the company’s reputation: the water-cooler rumor mill can now play out in very public and spreadable online spaces. And finally, attackers can create doctored videos called deepfakes that convincingly show public figures saying things on camera they never actually said. You’ve probably seen deepfakes of politicians like Barack Obama or Nancy Pelosi, but the same technique can be used to impersonate business leaders in videos that are shared online or circulated among staff.

With all of the different ways misinformation attacks can be used against businesses, it’s clear organizations need to be prepared to stay resilient in the face of any misinformation that appears. Here are 5 steps all organizations should take to build and maintain a misinformation-resilient business:

1. Monitor Social Media and Domains

Employees across various departments of your organization should keep their ears to the ground by closely monitoring for any strange or unusual activity by and about your brand. Your marketing and social media team should regularly keep an eye on any online chatter about the brand and evaluate the veracity of the claims being made, where they originate, and how widely the information is being shared.

At the same time, your IT department should be continuously looking for new domains that mention or closely resemble your brand. It’s common for scammers to create domains that impersonate brands in order to spread false information, phish for private information, or just sow confusion. The frequency of domain spoofing has skyrocketed this year, as bad actors take advantage of the panic and confusion surrounding the COVID-19 pandemic. When it comes to spotting deepfakes, your IT team should invest in software that can detect whether images and recordings have been altered.
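To make the domain-monitoring idea concrete, here is a minimal sketch of how a list of newly observed domains could be screened for look-alikes. Everything in it is an assumption for illustration: the brand domain `examplebrand.com`, the 0.8 similarity cutoff, and the small table of digit-for-letter swaps are hypothetical, and a real monitoring service would draw candidates from a feed of new registrations rather than a hand-written list.

```python
from difflib import SequenceMatcher

# Hypothetical brand domain and cutoff, chosen only for illustration.
BRAND_DOMAIN = "examplebrand.com"
SIMILARITY_THRESHOLD = 0.8

# A few common digit-for-letter swaps seen in look-alike domains (not exhaustive).
HOMOGLYPHS = str.maketrans({"0": "o", "1": "l", "3": "e", "5": "s"})


def canonical(domain: str) -> str:
    """Lowercase the domain and drop a leading 'www.'."""
    domain = domain.strip().lower()
    return domain[4:] if domain.startswith("www.") else domain


def flag_lookalikes(candidates, brand=BRAND_DOMAIN, threshold=SIMILARITY_THRESHOLD):
    """Return (domain, score) pairs that resemble the brand but are not the brand."""
    brand_c = canonical(brand)
    flagged = []
    for domain in candidates:
        cand_c = canonical(domain)
        if cand_c == brand_c:
            continue  # the genuine domain is never flagged
        # Compare after undoing digit swaps, so "examp1e" scores like "example".
        score = SequenceMatcher(
            None, cand_c.translate(HOMOGLYPHS), brand_c.translate(HOMOGLYPHS)
        ).ratio()
        if score >= threshold:
            flagged.append((domain, round(score, 2)))
    return flagged


if __name__ == "__main__":
    seen = ["examplebrand.com", "examp1ebrand.com", "unrelated.org"]
    for domain, score in flag_lookalikes(seen):
        print(f"review: {domain} (similarity {score})")
```

String similarity is a blunt instrument, so anything flagged this way is a prompt for a person to look, not proof of an attack.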

Across all departments, your organization needs to keep an eye out for any potential misinformation attacks. Departments also need to be in regular communication with each other and with business leadership to evaluate the scope and severity of threats as soon as they appear.

2. Know When You Are Most Vulnerable

Often, scammers behind misinformation attacks are opportunists. They look for big news stories, moments of transition, or times when investors will be keeping a close eye on an organization in order to create attacks with the biggest impact. Broadcom’s shares plummeted after a fake memorandum from the US Department of Defense claimed an acquisition the company was about to make posed a threat to national security. Organizations need to stay vigilant for moments that scammers can take advantage of, and prepare a response to any potential attack that could arise.

3. Create and Test a Response Plan

We’ve talked a lot about the importance of having a cybersecurity incident response plan, and the same rule is true for responding to misinformation. Just as with a cybersecurity attack, you shouldn’t wait to figure out a response until after an attack has happened. Instead, organizations need to form a team from various levels within the company and create a detailed plan of how to respond to a misinformation campaign before it actually happens. Teams should know what resources will be needed to respond, who internally and externally needs to be notified of the incident, and which team members will respond to which aspect of the incident.

It’s also important to not just create a plan, but to test it as well. Running periodic simulations of a disinformation attack will not only help your team practice their response, but can also show you what areas of the response aren’t working, what wasn’t considered in the initial plan, and what needs to change to make sure your organization’s response runs like clockwork when a real attack hits. Depending on the organization, it may make sense to include disinformation attacks within the cybersecurity response plan or to create a new plan and team specifically for disinformation.

4. Train Your Employees

Employees throughout the organization should also be trained to understand the risks disinformation can pose to the business, and how to effectively spot and report any instances they may come across. Employees need to learn how to question images and videos they see, just as they should be wary of links in an email. They should be trained on how to quickly respond internally to disinformation originating from other insiders like disgruntled employees, and key personnel need to be trained on how to quickly respond to disinformation in the broader digital space.

5. Act Fast

Putting all of the above steps in place will enable organizations to take swift action against disinformation campaigns. Fake news spreads fast, so organizations need to act just as quickly. Putting your response plan in motion, communicating with your social media followers and stakeholders, and contacting social media platforms to have the disinformation removed all need to happen quickly for your organization to stay ahead of the attack.

It may make sense to think of cybersecurity and misinformation as two completely separate issues, but more and more businesses are finding out that the two are closely intertwined. Phishing attacks rely on disinformation tactics, and fake news uses technical sophistication to make its content more convincing and harder to detect. In order to stay resilient to misinformation, businesses need to incorporate these issues into larger conversations about cybersecurity across all levels and departments of the organization. Preparing now and having a response plan in place can make all the difference in maintaining your business’s reputation when false information about your brand starts making the rounds online.

by admin | Oct 9, 2020 | social engineering

Social engineering costs companies billions a year. And the bad guys don’t need a lot of fancy technology to get to you. They just need to understand human behavior. Take some time and have your staff work on developing good digital behaviors. It will save you in the long run.

by Doug Kreitzberg | Aug 28, 2020 | Business Email Compromise, Cybersecurity, social engineering

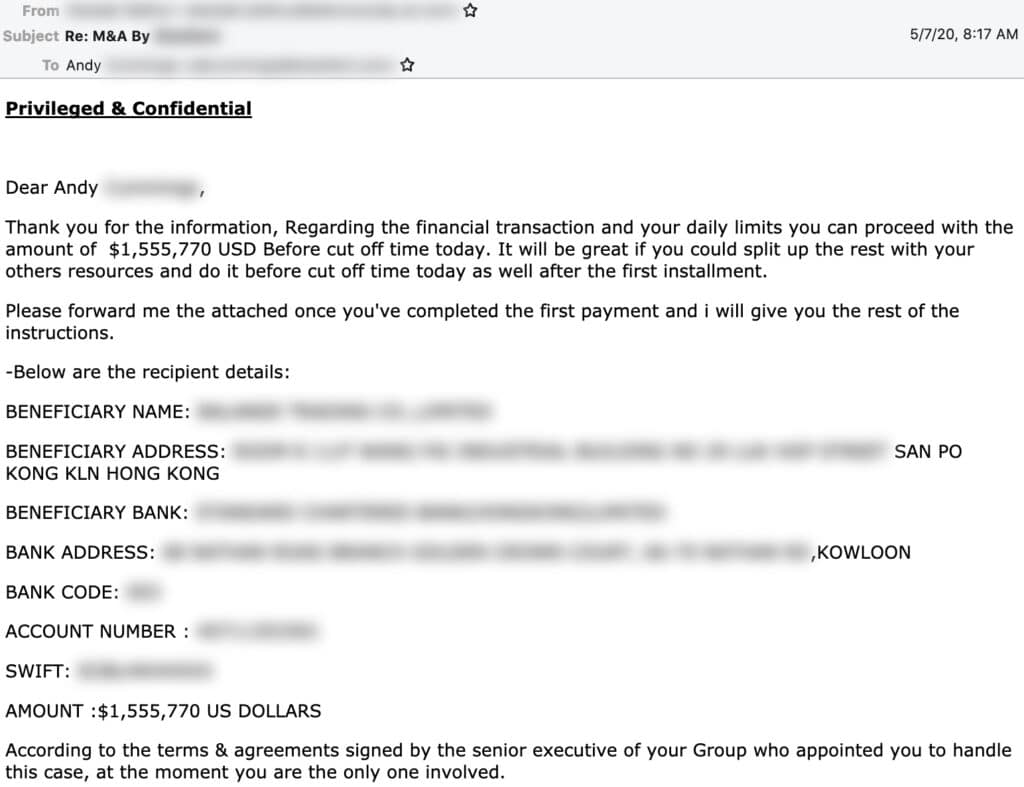

Last week we wrote about the significant cost of business email compromise (BEC) scams compared to other, more-publicized cyber attacks. Now, the cybersecurity firm Agari has published a report showing a new BEC threat emerging — one that is more sophisticated and more costly than what we have seen in the past.

Business email compromise is a form of social engineering scam that has been around for a long time. “Nigerian prince scams,” for example, are what people often think of when they think of these types of attacks. However, as technology and modes of communication have gotten more sophisticated, so too have the scammers. Agari’s new report details the firm’s research on a new gang of BEC scammers based in Russia that call themselves “Cosmic Lynx.”

Unlike most BEC scams that tend to target smaller, more vulnerable organizations, the group behind Cosmic Lynx tends to go after gigantic corporations — most of which are Fortune 500 or Global 2000 companies. While larger organizations are more likely to have more sophisticated cybersecurity protocols in place, that doesn’t mean they can’t be tricked, and the payout for successful scams is significantly larger. The average amount requested through BEC is typically $55,000. Cosmic Lynx, on the other hand, requests $1.27 million on average.

How Does it Work?

While the basics of Cosmic Lynx’s BEC attacks are pretty standard, the group uses more advanced technology and social engineering tactics to make its scams more successful.

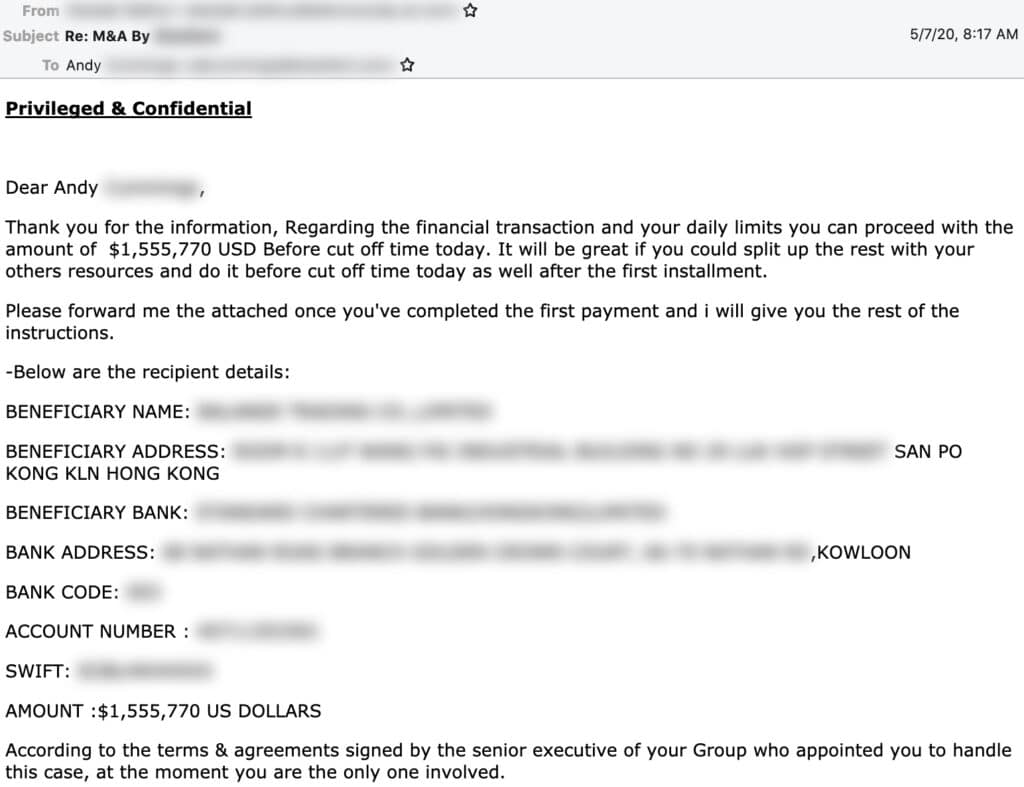

Typically, Cosmic Lynx uses a “dual impersonation scheme” that mimics individuals both inside and outside the targeted organization. Moreover, by manipulating standard email authentication settings and registering domains that imitate common secure email domains (such as secure-mail-gateway[.]cc), the group is able to convincingly spoof its email address and display name to look almost identical to those of employees within the targeted business. Acting as the CEO, the group will typically send an email to a Vice President or Managing Director notifying them of a new acquisition and referring the employee to an external legal team to finalize the deal and transfer funds.
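One narrow slice of this tactic, a familiar display name paired with an unfamiliar domain, is simple enough to check mechanically. The sketch below is only an illustration under stated assumptions: `COMPANY_DOMAIN` and `EXECUTIVE_NAMES` are hypothetical values, and the check does nothing against a sender who spoofs the exact company address, which is a job for email authentication such as SPF, DKIM, and DMARC.

```python
from email.utils import parseaddr

# Hypothetical values for illustration only.
COMPANY_DOMAIN = "example.com"
EXECUTIVE_NAMES = {"jane doe", "john smith"}


def is_suspicious_sender(from_header: str) -> bool:
    """Flag a From header whose display name matches an executive
    but whose address is not on the company's own domain."""
    display_name, address = parseaddr(from_header)
    domain = address.rpartition("@")[2].lower()
    claims_executive = display_name.strip().lower() in EXECUTIVE_NAMES
    return claims_executive and domain != COMPANY_DOMAIN


if __name__ == "__main__":
    for header in (
        "Jane Doe <jane.doe@secure-mail-gateway.cc>",
        "Jane Doe <jane.doe@example.com>",
    ):
        print(header, "->", is_suspicious_sender(header))
```

A mail filter could use a check like this to add a warning banner rather than to block outright, since executives do sometimes write from personal accounts.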

Cosmic Lynx will then impersonate a real lawyer and send the employee an email explaining that they are helping to facilitate the payment. Of course, the account receiving the funds is actually a mule account, typically Hong Kong-based, controlled by Cosmic Lynx.

Source: Agari

For now, Cosmic Lynx seems to be the only group carrying out this new kind of BEC attack. However, it is very likely that other groups, seeing the amount Cosmic Lynx is raking in, will begin to follow suit. Simply put, the level of sophistication involved in these scams will require businesses to have more sophisticated protections in place. While more advanced email filters may help to detect spoofed email addresses, the most effective way to prevent BEC scams is to have strong policies in place to verify payment requests before releasing funds.
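What a payment verification policy looks like will differ from business to business, but the core rule can be written down in a few lines. The sketch below assumes a hypothetical policy: any payment to a new beneficiary, or any payment at or above an invented 10,000 threshold, needs a phone callback to a number already on file, and large payments need two approvers other than the requester. None of these numbers come from the Agari report.

```python
from dataclasses import dataclass, field

# Hypothetical threshold; a real policy would set its own.
CALLBACK_THRESHOLD = 10_000


@dataclass
class PaymentRequest:
    amount: float
    requested_by: str
    beneficiary_is_new: bool
    # True only after someone confirmed the request by calling a number
    # already on file, never a number supplied in the email itself.
    callback_verified: bool = False
    approvers: set = field(default_factory=set)


def may_release(request: PaymentRequest) -> bool:
    """Apply the sketch policy: callback first, then independent approvals."""
    is_large = request.amount >= CALLBACK_THRESHOLD
    if (is_large or request.beneficiary_is_new) and not request.callback_verified:
        return False
    # The requester cannot approve their own request.
    independent = request.approvers - {request.requested_by}
    return len(independent) >= (2 if is_large else 1)


if __name__ == "__main__":
    wire = PaymentRequest(1_270_000, "vp", beneficiary_is_new=True, approvers={"cfo"})
    print(may_release(wire))  # blocked until the callback and a second approver
```

The point of encoding the rule is that an urgent email from the "CEO" cannot talk its way around it: the funds stay put until the out-of-band steps are recorded.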

by Doug Kreitzberg | Aug 19, 2020 | Artificial Intelligence, Disinformation, Human Factor, Phishing, social engineering

Earlier this month, a study by University College London identified the top 20 security issues and crimes likely to be carried out with the use of artificial intelligence in the near future. Experts then ranked the list of future AI crimes by the potential risk associated with each crime. While some of the crimes are what you might expect to see in a movie, such as autonomous drone attacks or using driverless cars as a weapon, it turns out 4 out of the 6 crimes of highest concern are less glamorous, and instead focus on exploiting human vulnerabilities and biases.

Here are the top 4 human-factored AI threats:

Deepfakes

The ability of AI to fabricate visual and audio evidence, commonly called deepfakes, is the most concerning threat overall. The study warns that the use of deepfakes will “exploit people’s implicit trust in these media.” The concern is not only related to the use of AI to impersonate public figures, but also the ability to use deepfakes to trick individuals into transferring funds or handing over access to secure systems or sensitive information.

Scalable Spear-Phishing

Other high-risk, human-factored AI threats include scalable spear-phishing attacks. At the moment, crafting phishing emails that target specific individuals requires time and energy to learn the victim’s interests and habits. However, AI can expedite this process by rapidly pulling information from social media or impersonating trusted third parties. AI can therefore make spear-phishing more likely to succeed and far easier to deploy on a mass scale.

Mass Blackmail

Similarly, the study warns that AI can be used to harvest masses of information about individuals, identify those most vulnerable to blackmail, then send tailor-crafted threats to each victim. These large-scale blackmail schemes can also use deepfake technology to create fake evidence against those being blackmailed.

Disinformation

Lastly, the study highlights the risk of using AI to author highly convincing disinformation and fake news. Experts warn that AI will be able to learn what type of content will have the highest impact, and generate different versions of one article to be published by a variety of (fake) sources. This tactic can help disinformation spread even faster and make it seem more believable. Disinformation has already been used to manipulate political events such as elections, and experts fear the scale and believability of AI-generated fake news will only increase the impact disinformation will have in the future.

The results of the study underscore the need to develop systems to identify AI-generated images and communications. However, that might not be enough. According to the study, when it comes to spotting deepfakes, “[c]hanges in citizen behaviour might [ ] be the only effective defence.” With the majority of the highest-risk crimes being human-factored threats, focusing on our own ability to understand ourselves and developing behaviors that give us the space to reflect before we react may therefore become the most important tool we have against these threats.

by Doug Kreitzberg | Aug 12, 2020 | Cybersecurity, Phishing, social engineering

By now, most people have heard about the hack of high-profile Twitter accounts that took place on July 15th. To carry out the attack, the perpetrators used a social engineering tactic called “vishing” — short for voice phishing — in which attackers use phone calls rather than email or messages to trick individuals into giving out sensitive information. The incident once again highlights the risks associated with human rather than technical vulnerabilities, and shows Twitter’s shortcomings in managing employee access controls.

On the day of the attack, the accounts of big names like former President Barack Obama, Elon Musk, Jeff Bezos, and Joe Biden all tweeted a message asking users to send them bitcoin with the promise of being sent back double the amount. Of course, this turned out to be a scam and the tweets were quickly removed, but not before the hackers received over $100,000 worth of bitcoin.

According to a statement by Twitter, the attackers gained access to the company’s internal systems the same day as the attack. Using “a phone spear phishing attack,” commonly known as vishing, the scammers tricked lower-level employees into revealing credentials that gave them access to Twitter’s internal systems. This access did not immediately let the attackers into user accounts. Once inside, however, they were able to carry out additional attacks on other employees who did have access to Twitter’s support controls. From there, the hackers had access to every account on Twitter and could make important changes, including changing the email address associated with an account.

While vishing is not the most well-known or most frequent form of social engineering attack, the Twitter hack shows just how dangerous it can be. It’s the one type of attack that requires no code, email, or USB device to carry out. However, there are key protections businesses can use, and that should have been in place at Twitter. First among them is to have explicit policies and safeguards for disclosing credentials and wiring funds. Individual employees should not be allowed to give out information on their own, even if they think they are giving it to a trusted colleague. Instead, employees should have to communicate with a third party within the company who can verify an employee’s identity before sharing credentials.

Secondly, Twitter needed to have stricter access controls in place, throughout all levels of the company. While Twitter claims that “access to [internal] tools is strictly limited and is only granted for valid business reasons,” this was clearly not the case on July 15th. And even though the employees that were initially exploited did not have full access to user accounts, the hackers were able to leverage the limited access they had to then gain even more advanced and detailed permission rights. Businesses should therefore ensure all employees, even with limited access, have the proper cyber awareness training and undergo simulations of various social engineering attacks.

Lastly, when it comes to vishing, it’s important to use techniques similar to those used to spot other types of scams. When getting a call, the first thing to do is simply take a breath. This will interrupt automatic thinking and allow you to be more alert. You also need to make sure you are actually talking to who you think you are. Scammers can make a call look like it’s coming from a trusted number, so even if you get a call from someone in your contacts it could still be a scammer. That’s why it’s important to focus on what the phone call or voicemail is trying to convey. Is it too urgent? Are they probing for sensitive or personal information about you or others? Is it relevant to what you already know? If anything at all seems off, be extra cautious before sharing anything that could be damaging.

While you may feel comfortable spotting a phishing attack, hackers and scammers are constantly looking for new ways to trick us. And, as the Twitter hack shows, they are very good at what they do. It’s better to be too cautious and assume you are at risk of being scammed than to think it could never happen to you. Because it can.

by Doug Kreitzberg | Aug 5, 2020 | Business Email Compromise, Cyber Awareness, Cybersecurity, Data Breach, Phishing, social engineering

Earlier this week we wrote about the cost of human-factored, malicious cyber attacks. However, there are also other threats that can lead to a malicious attack and data breach. According to this year’s Cost of a Data Breach Report, stolen or compromised credentials tied for the most frequent cause of malicious data breaches, and took the lead as the most costly form of malicious breach.

The root cause of compromised credentials varies. In some cases, stolen credentials are also related to human-factored social engineering scams such as phishing or business email compromise attacks. In other cases, your login information may have been stolen in a previous breach of online services you may use. Hackers will often sell that data on the dark web, where bad actors can then use the data to carry out new attacks.

Whatever the cause, the threat is real and costly. According to the report, compromised credentials accounted for 1 out of every 5 — or 19% of — reported malicious data breaches. That makes this form of attack tied with cloud misconfiguration as the most frequent cause of a malicious breach. However, stolen credentials tend to cost far more than any other cause of malicious breach. According to the report, the average cost of a breach caused by compromised credentials is $4.77 million — costing businesses nearly $1 million more than other forms of attack.

Given the frequency of data breaches caused by compromised credentials, individuals and businesses alike need to be paying closer attention to how they store, share, and use their login information. Luckily, there are a number of pretty simple steps anyone can take to protect their credentials. Here are just a few:

Password Managers

There are now a variety of password managers that can vastly improve your password strength and will help stop you from using the same or similar passwords for every account. In many cases, they can be installed as a browser extension and phone app and will automatically save your credentials when creating an account. Not only are password managers an extremely useful security tool, they are an incredibly convenient tool for a time when we all have hundreds of different accounts.
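The reason a password manager improves password strength is that it generates each password randomly instead of relying on human memory. As a rough illustration of that one step (this is not how any particular product works, and the 20-character default and 12-character minimum are assumptions), Python’s standard `secrets` module can do the same thing:

```python
import secrets
import string

# Letters, digits, and punctuation; some sites restrict which symbols they accept.
ALPHABET = string.ascii_letters + string.digits + string.punctuation


def generate_password(length: int = 20) -> str:
    """Return a random password drawn from a cryptographically secure source."""
    if length < 12:
        raise ValueError("use at least 12 characters")
    return "".join(secrets.choice(ALPHABET) for _ in range(length))


if __name__ == "__main__":
    print(generate_password())
```

Because every account gets its own random string, a breach at one service no longer hands attackers a password that works anywhere else.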

Multi-Factor Authentication

Another important and easy-to-use tool is multi-factor authentication (MFA), in which you are sent a code after logging in to verify your account. So, even if someone stole your login credentials, they still won’t be able to access your account without a code. While best practice would be to use MFA for any account that offers the feature, everyone should at the very least use it for accounts that contain personal or sensitive information, such as online bank accounts, social media accounts, and email.
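Codes can be delivered by text message, but many services instead use an authenticator app that computes a time-based one-time password (TOTP, standardized in RFC 6238) from a secret shared at setup. The sketch below shows that calculation using only the standard library. It is a teaching example under simplifying assumptions (SHA-1, 30-second steps, raw-bytes secret, no tolerance for clock drift), not a replacement for a vetted MFA library.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def totp(secret: bytes, at: Optional[float] = None, step: int = 30, digits: int = 6) -> str:
    """Compute an RFC 6238 time-based one-time password with HMAC-SHA1."""
    now = time.time() if at is None else at
    counter = int(now // step)  # number of time steps since the Unix epoch
    digest = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = digest[-1] & 0x0F  # dynamic truncation, as in RFC 4226
    code = struct.unpack(">I", digest[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def verify(secret: bytes, submitted: str, at: Optional[float] = None) -> bool:
    """Compare in constant time so the check does not leak partial matches."""
    return hmac.compare_digest(totp(secret, at), submitted)


if __name__ == "__main__":
    shared_secret = b"12345678901234567890"  # the RFC's published test secret
    print(totp(shared_secret))
```

A stolen password alone fails `verify`, because the attacker also needs the shared secret stored on your phone to produce the current code.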

Check Past Compromises

In order to ensure your information is protected, it’s important to know if your credentials have ever been exposed in previous data breaches. Luckily, there is a site that can tell you exactly that. Have I Been Pwned is a free service created and run by cybersecurity expert Troy Hunt, who keeps a database of information compromised during breaches. Users can search the data to see if their email address or previously used passwords have ever been involved in those breaches. You can also sign up to receive notifications if your email is ever involved in a breach in the future.
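For passwords, the check can also be scripted without ever sending the password itself. The sketch below assumes the service’s Pwned Passwords range endpoint behaves as publicly documented: you send only the first five characters of the password’s SHA-1 hash and receive lines of `SUFFIX:COUNT` for every known hash with that prefix. The `User-Agent` string and function names are my own, and the email address search is not covered here.

```python
import hashlib
import urllib.request

# Assumed endpoint for the Pwned Passwords range search.
RANGE_URL = "https://api.pwnedpasswords.com/range/"


def split_hash(password: str):
    """Return the (5-character prefix, 35-character suffix) of the SHA-1 hex digest."""
    digest = hashlib.sha1(password.encode("utf-8")).hexdigest().upper()
    return digest[:5], digest[5:]


def count_in_response(suffix: str, body: str) -> int:
    """Find our suffix among the 'SUFFIX:COUNT' lines; 0 means not found."""
    for line in body.splitlines():
        candidate, _, count = line.partition(":")
        if candidate.strip().upper() == suffix:
            return int(count)
    return 0


def pwned_count(password: str) -> int:
    """Query the range endpoint; only the hash prefix leaves this machine."""
    prefix, suffix = split_hash(password)
    request = urllib.request.Request(
        RANGE_URL + prefix, headers={"User-Agent": "pwned-check-sketch"}
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return count_in_response(suffix, response.read().decode("utf-8"))


if __name__ == "__main__":
    print(pwned_count("password"))  # requires network access
```

Any nonzero count means the password has appeared in a breach and should be retired everywhere it is used.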

Cyber Awareness Training

Lastly, in order to keep your credentials secure, it’s important that you don’t get tricked into giving them away. Social engineering, phishing, and business email compromise schemes are all highly frequent, and often successful, ways bad actors will try to gain access to your information. Scammers will send emails or messages pretending to be from a company or official source, then direct you to a fake website where you are asked to fill out information or log in to your account. Preventing these scams from working largely depends on your ability to accurately spot them. And, given the increased sophistication of these scams, using a training program specifically designed to teach you how to spot the fakes is very important.